Why most planetary civilizations collapse

I didn’t get into video games until I was in my 40s. Oddly enough, it was a historian who triggered my interest. Niall Ferguson, the bestselling author, columnist, TV personality and Stanford professor, penned a 2006 New York Magazine piece, “How to Win a War,” that persuasively extolled the virtues of video games as tools for learning about history. He was particularly impressed by a certain turn-based PC strategy game that purported to model World War II—playing it, he said, had seriously challenged some of his own beliefs about the war.

I was not as impressed when I played that particular game, and later a more sophisticated competitor. The limitations of consumer-level computers and developer teams meant that these games simply couldn’t model the dynamics of the WW2-era world very well. However, even at that very modest level of simulation, the experience of replaying a historical period again and again, for dozens to hundreds of playthroughs, did prompt some thoughts about history in general.

One was simply that replaying a given stretch of history, which is to say, generating one variant history after another, has the effect of diminishing the significance of any of those variants. Naturally, in the highly abstracted milieu of a video game, one expects to be far less sensitive to details than one would be in real life. But I noticed that I became progressively desensitized to the details of the real-life WW2 as well: they seemed less interesting and meaningful.

To put it another way, my picture of this period of history was no longer formed from one clear image-capture, but from many—and in that multiple exposure, so to speak, most details were nonrecurring; they therefore tended to fade away as the number of exposures grew.

Would real-life history look different each time if we could re-run it from the same starting point? It absolutely would. Even one modern country is an enormously complex and nonlinear system: it will always vary significantly in how it runs from the same starting conditions, and the details of its course will be hard to predict very far in advance. (Think of how hard it is for us to foresee the course of a much simpler nonlinear system, the weather.)

Even so, we almost never think of history in this way. Experience encourages us instead to think of any historical episode as a singular phenomenon (one unique block of spacetime, never to be repeated), and that in turn leads us to frame any history as a set of events linked by cause-effect relationships. Typically, we also try to draw big lessons from it all: the “lessons of history.” By contrast, when we have the ability to simulate replays of that block of spacetime again and again, seeing how things play out differently each time, it makes the inherently probabilistic nature of history stand out much more sharply. We are, in effect, forced to face a reality we normally wouldn’t acknowledge.

To illustrate again with an extreme example: Suppose one had a large bucket filled with a million marbles, each with its own identifying number, and suddenly dumped them onto some perfectly flat, expansive surface—and recorded precisely how they all bounced and rolled and reached some final arrangement. To the average person, that “history” of the marbles wouldn’t be particularly interesting, would it? The average person would understand intuitively that this marble-history was basically random, would look different in every re-run, and had nothing to teach, other than that marbles reliably obey known laws of mechanics. For that reason, writing a detailed History of the Marbles—or worse, having a dozen marble historians write their own competing tomes—would be absurd. Possibly such histories would be of interest to marbles, who might be curious about all the individual collisions that had brought them to their present positions. But to beings capable of a wider perspective, a history of the marbles would seem pointless—a measuring of statistical noise, as mathematicians would say.

*

Apropos of all that, at some point in my WW2-gaming sojourns I came up with a weird thought-experiment:

Suppose the virtual soldiers and citizens populating any given playthrough had human-like feelings, and regarded that playthrough—their playthrough—as the only one that had ever happened? What would these virtual people do if I, as the Player-God above them, suddenly revealed to them the true nature of their existence—in other words, revealed their “history” as but one chance-ridden playthrough among many?

They would despair, wouldn’t they? Not only at the revelation that their existence was a mere simulation, but also in the recognition that it was merely one of many variant, stochastically determined existences—one semi-random timeline among thousands, or really billions considering the wider universe of players with their separate copies of the game. They would see that, even as a simulation, their existence was effectively meaningless in the grand scheme of things.

Someday, computer games may be invented that not only simulate human events with a high degree of complexity, but also, via the right hardware, imbue their human-like characters with some degree of consciousness. Given the situation of these simulated humans, aware that they are trapped in worlds of no meaning or consequence, we as godlike players will feel sorry for them. However, the sufferings of our virtual creatures should be the least of our worries at that point—for by then we should have recognized that, as creatures of no consequence ourselves, we are in the same damned boat.

*

Can that be true? Is what you or I experience as “real life” merely one probabilistically determined playthrough among an infinitude of them?

The short answer is: very likely yes. And this is arguably the most important revelation—or, if you like, compelling theory—produced by science to date. Moreover, the idea I propose here is that any human civilization capable of grasping this true nature of our reality will eventually enter a state of deep and chronic despair, which perhaps can end only in human extinction.

This putative process of discovery and despair has an interesting, foreshadowing parallel in the most famous Western account of human origin, that of Adam and Eve in the Garden of Eden. For we are, with our science, compulsively eating a forbidden, toxic fruit (of the Tree of Knowledge) and are thereby, in effect, exiling ourselves from the lush, blissfully ignorant existence we briefly had.

And this may not be just a human affliction. It may be one that always strikes species once they reach a certain level of technical and scientific advancement. If so, then plausibly it has already extinguished most of the smart species across the universe, and has made the rest avoidant lest they transmit to us truths we cannot handle. This would explain the paradox—“Fermi’s Paradox”—that the universe probably has incubated trillions upon trillions of alien civilizations, yet the latter’s visits to us appear to have been relatively few and furtive.

*

Science, as we know it, is a very recent development. Broadly speaking, it is one of the fruits of the Neolithic Revolution, which began in the Eastern Mediterranean about 12,000 years ago, and by about 1000 A.D. had spread to almost every human society. This major shift in the human lifeway, from nomadism to farming and settlement-building, triggered a rapid, self-catalyzing increase in the scale and complexity of our societies, and the development of many new institutions. Science, however, was one of the slowest to emerge, and as an ongoing, global institution, dominant over magic and religion, it has existed for only about a century and a half.

The progress of science has been bittersweet. On the one hand, it has led to better living standards through better knowledge and technology—e.g., better crop yields, better sanitation, better medicines, and a vastly better understanding and command of our environment. On the other hand, it has relentlessly belied man’s instinctive, high opinion of himself as a special creature of God, “made in His image.”

One of the earliest and most famous examples of this type of psychologically problematic scientific knowledge was the idea (introduced by Copernicus in 1543, and later refined and popularized by Kepler and Galileo) that our planet is not at the center of the universe. It took hundreds of years, and considerable technical developments in astronomy, for the painful truth that the universe does not revolve around us to be accepted. But in a sense, we are still struggling to cope with the implications. If we are not situated centrally in the universe, how could it have been made specifically for us, as our religions have led us to believe? A cosmology that placed us in one wispy spiral arm of one nondescript galaxy among trillions of galaxies might have been an important step forward for our science, but it was a giant leap downward for our self-image.

Then, of course, there was Darwin. Humans as mere animals, evolutionary cousins of apes? Impossible! The Church resisted that theory as it had resisted Galileo and Copernicus. But by Darwin’s time, science was much stronger, the Church much weaker, and within only a few decades, serious opposition to the theory of evolution by natural selection started to fade away.

It was also becoming clear, by then, that Earth couldn’t have been around for only a few thousand years, as accounts such as Genesis implied. Empowered by the discovery of radioactivity and radioactive decay, geologists by the mid-1920s understood that Earth was formed billions of years ago. This implied that we, H. sapiens, are merely an incidental and very recently developed addition to our planet’s fauna. In fact, many paleontologists now suspect that, had that asteroid not hit our planet about 66 million years ago, largely wiping out the then-dominant dinosaurs, tool-making primates like us might never have evolved.

Since the end of the 20th century, cosmologists generally have agreed that our observable universe had existed for roughly nine billion years before our solar system was even formed. That means that humans are almost certainly latecomers to the higher intelligence club, and may be as primitive and uncomprehending, in relation to truly advanced species, as ants or amoebas are to us.

All this points to the conclusion that a God of the Universe, if anything like Him exists, has no special interest in humans; and, moreover, that all human “meaning” and “significance” is strictly local—strictly confined to our tiny speck of reality.

*

Probably like most people who grew up in the latter half of the 20th century, I’ve tended to react to these scientific revelations by ignoring them. To the extent that I did think about them, in my younger years, I assumed with vague optimism that humans someday, through better technology, could spread from one star system to another, and so on until they established their universality, perhaps ultimately melding with whatever force or entity made the universe. I think it’s fair to say that a lot of other people, including prominent advocates of space exploration, still think the same way.

I now see such optimism as a form of denial—a denial that is going to be harder and harder to maintain, as time goes on and we humans are increasingly confronted with the nature of our reality.

How we understand that reality is something that I expect will undergo various elaborations in the coming decades. But it should already be apparent that the idea we could ever “conquer the universe,” or in any way escape the utter insignificance of our existence, is naïve.

The most obvious (though not even the worst) part of the problem is that the universe is just unmanageably vast: larger than we can ever observe, expanding faster than light, and very likely infinite, which would mean that the human realm or contribution, in relation to the whole, could never be more than infinitesimal. The physicist and cosmology popularizer Brian Greene has described this idea that space is effectively infinite as “consistent with all observations and . . . part of the cosmological model favored by many physicists and astronomers.”

The human mind is not really adapted for contemplating infinities, but as Greene has pointed out, a truly infinite universe would contain, at any moment, infinite numbers of worlds identical to ours, some moving through time precisely as ours does, others with variations—in fact, all possible variations.

Again, compared to the whole of this Infinite Universe, and, we might also say, in the eyes of its Creator, the histories of individual worlds within it, along with their systems of morality and meaning, should be of infinitesimal significance. If we could take a God’s-eye view, zooming out from our planet to encompass our whole galaxy, and then galaxy clusters, and clusters of clusters, we would see the histories of individual worlds much as the video game player sees our world: less as sets of interlinked events, and more as manifestations of a broader, stochastic process, whose function is essentially only to ink over the space of possibility.

Contemporary physics, specifically quantum mechanics, delivers us to an even colder, darker destination. Quantum mechanics has at its core an equation, the Schrödinger wave equation, that implies a weird multiplicity of states for any given quantum-scale particle (an electron, for example) traveling through time. Physicists in the early years of quantum theory clung to the belief that these multiple states somehow probabilistically “collapse” to one state whenever one tries to observe the particle with a measuring device. However, in the past few decades the field basically has abandoned that rather hand-waving interpretation, mostly in favor of a simpler, more parsimonious one: that the multiple possible states a particle can be observed to have are all, in a sense, real.

In other words, these alternate states represent multiple actual particles existing in different “worlds” or “universes.” Thus, a physicist recording the impact of one particular state of an electron has, at that moment, otherwise identical counterparts in otherwise identical alternate universes who record the impacts of all the other states.

The reality implied by this interpretation—now called the Many Worlds Interpretation (MWI)—encompasses not just one very big universe but, rather, an infinite number of them, a “multiverse,” across which everything that can happen does happen. There is a perfection here that, at least in a technical sense, should impress those who always believed Creation would be flawless and complete.

Of course, from the usual sentimental human perspective, MWI looks bizarre and horrifying. Even so, its superior simplicity and parsimony, as a way of thinking about quantum phenomena, have enabled it to survive and spread despite its implications, which physicists don’t “like” any more than you or I do.

As Greene has noted:

“I find it both curious and compelling that numerous developments in physics, if followed sufficiently far, bump into some variation on the parallel-universe theme.”

*

“The multiverse will drive you crazy if you really think about how it affects your life, and I can’t live like that,” the philosopher of physics and MWI theorist Simon Saunders once told a reporter. “I’ll just accept [it] and then think about something else, to save my sanity.”

Is thinking about something else a viable strategy to escape the psychological consequences of modern cosmology?

Conceivably it is, up to a point. Humans evolved with basic, powerful drives towards survival and procreation, and even religiosity; they thus probably have, on average, a significant innate resistance to nihilist worldviews. Even now, well into the third millennium A.D., most of the human population professes belief in one religion or another. Also, obviously, the average person has no deep understanding of, or interest in, MWI or other modern cosmological theories.

Yet the things we do learn and think about ultimately affect our behavior, if only subconsciously. One doesn’t have to be a philosopher or a psychologist to understand—to take another extreme example—that if we all knew our solar system would be obliterated within a year, making it obvious that our existence was and always had been inconsequential, enough of us would fall into despair that our societies would start to disintegrate immediately.

I think the reason we’ve largely been able, so far, to resist the toxic implications of modern cosmology is simply that we haven’t been forced to confront them. But that situation is changing.

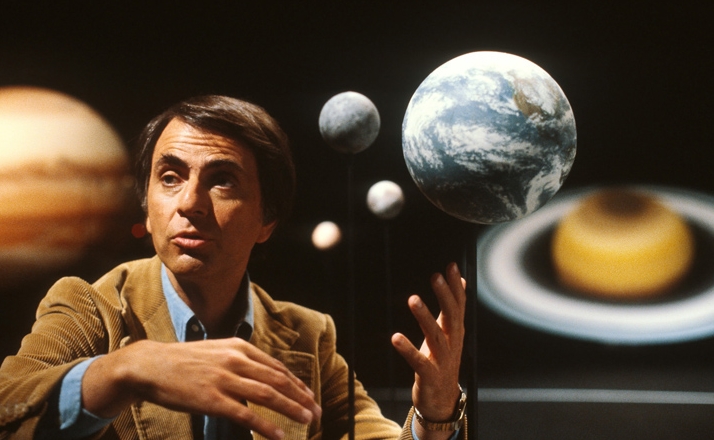

When I was growing up in the 1970s and early 1980s, cosmology was expansive but still quite tame compared to what was coming. Carl Sagan’s 1980 Cosmos TV series on PBS, for example, was hardly despair-inducing. One could contemplate the large universe depicted by Sagan and other pop cosmologists of the time, and, as I noted above, could still fantasize about humans’ someday traversing and conquering it. MWI and other infinite-universe theories had not yet caught on, certainly not at the popular level.

These days, by contrast, MWI and similar “parallel universe” themes are essential elements of pop cosmology, and, perhaps more importantly, are also common in pop culture generally.

Moreover, although technologies based on quantum mechanics (such as lasers) have been around for decades, newer quantum tech such as quantum computing and quantum encryption emphasizes, for the first time, the spookier, multiplicity-of-states aspect of quantum mechanics—the aspect that MWI essentially was devised to explain. Thus, from popular science to tech to popular media culture generally, people are being exposed to the infinite-universe/multiverse idea as never before, and in ever-stronger doses.

The impact of that rising exposure won’t be immediately obvious. There are, and in the coming decades will continue to be, many other drivers of despair, disruption, suicide, and social disintegration in the modern world—drivers such as cultural feminization, mass immigration, and human-displacing AI systems. Trying to disentangle the effect of one of these from the others is going to be challenging, to put it mildly. But, if my hypothesis is correct, “cosmological despair” will weigh more and more heavily and evidently on developed societies—especially among younger people, who will encounter MWI and similarly harsh cosmologies in their formative years, never having had the comforts of older, friendlier worldviews. In other words, if the world is now entering an Age of Despair principally for other reasons, cosmology will keep it there terminally.

*

There probably aren’t very many clear examples, yet, of people taking their own lives as a result of belief in MWI or other toxic cosmologies. However, something like this seems to have happened in the case of Hugh Everett III, the physicist who developed the original version of MWI (“‘Relative State’ Formulation of Quantum Mechanics”) as his Princeton PhD thesis in 1957.

Everett eventually became a financially successful tech entrepreneur and in most ways seemed normal, being married with children, having friends, and pursuing ordinary hobbies and pleasures that included wine-making and ocean liner cruises. However . . .

Everett firmly believed that his many-worlds theory guaranteed him immortality: His consciousness, he argued, is bound at each branching to follow whatever path does not lead to death—and so on ad infinitum. [link]

Probably at least partly due to this belief, he smoked, drank, and ate with abandon, which ultimately gave him a fatal heart attack in 1982, when he was only 51 years old. In accordance with his wishes, his body was cremated and his ashes were thrown out with other household garbage.

A decade and a half later, Everett’s troubled 39-year-old daughter Liz took her own life even more directly. She left a note to the effect that she wanted her own ashes thrown out with the garbage, so that she might “end up in the correct parallel universe to meet up w[ith] Daddy.”

***